The code of ai has evolved from a wild west of experimental tools into a structured discipline with clear standards, responsibilities, and best practices. As AI-powered development tools become standard in production environments, developers need to understand the emerging guidelines that govern how AI-generated code enters real-world applications. The question is no longer whether to use AI coding assistants; it's how to use them responsibly.

Understanding the Code of AI Framework

The code of ai represents more than just technical specifications. It encompasses the principles, responsibilities, and workflows that define how developers integrate AI assistance into their development process while maintaining code quality, security, and accountability.

Modern AI coding tools like GitHub Copilot, Claude Code, and similar assistants generate billions of lines of code annually. The Linux community recently established clear policies on AI-generated code, emphasizing that while AI tools are acceptable, human developers bear full responsibility for every contribution. This principle forms the foundation of responsible AI-assisted development.

Core Principles Developers Must Follow

The code of ai starts with accountability. When you ship AI-generated code to production, you own it completely. No disclaimers, no excuses about "the AI made a mistake."

Key responsibilities include:

- Review every line – Never merge AI suggestions without understanding the logic

- Test comprehensively – AI code requires the same testing standards as human-written code

- Document decisions – Explain why you accepted or modified AI suggestions

- Monitor security – AI models can introduce vulnerabilities by replicating insecure patterns from their training data

- Maintain context – Ensure AI-generated code fits your project's architecture

These aren't optional guidelines. They're the emerging standard for professional development with AI assistance.

Human Accountability in AI Code Generation

The detailed Linux policy discussions reveal a critical aspect of the code of ai: humans take full responsibility for mistakes. This applies whether you're contributing to open source projects or shipping commercial applications.

Think of AI coding assistants as junior developers who work incredibly fast but lack judgment. They can generate syntactically correct code that solves the immediate problem while introducing subtle bugs, security flaws, or architectural inconsistencies. Your job is to catch these issues before they reach production.

| Responsibility Area | Developer Action | AI Tool Role |
|---|---|---|
| Code Quality | Final review and approval | Initial implementation |
| Security Audits | Verify all generated code | Suggest common patterns |
| Bug Accountability | Own all production issues | Assist with debugging |
| Documentation | Maintain accurate records | Generate initial drafts |
| Architecture Decisions | Make final choices | Provide options |

The code of ai demands that developers understand the code they ship. If you can't explain how a function works, you shouldn't commit it. This standard protects codebases from accumulating technical debt disguised as productivity gains.

Implementing Review Processes

Establish a review workflow specifically for AI-generated code. This differs from standard peer review because you're validating not just the solution but the approach itself.

Start by asking these questions for every AI suggestion:

- Does this code follow our architectural patterns?

- Are there security implications I need to verify?

- Will future developers understand this logic?

- Does this introduce dependencies we want to avoid?

- Is this the most maintainable solution?

The code of ai emphasizes understanding over speed. Accepting AI suggestions without review might ship features faster initially, but creates maintenance nightmares later.
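One way to make the review questions above hard to skip is to encode them as an explicit checklist that blocks a merge until every item is answered. The following is a minimal sketch under that assumption; the class name `AIReviewChecklist` and its field names are hypothetical and simply mirror the five questions.

```python
from dataclasses import dataclass, fields


@dataclass
class AIReviewChecklist:
    """One flag per review question; every flag must be True before merging."""

    follows_architecture: bool = False
    security_verified: bool = False
    readable_by_future_devs: bool = False
    dependencies_acceptable: bool = False
    most_maintainable_option: bool = False

    def unresolved(self) -> list[str]:
        """Return the names of questions that are not yet answered 'yes'."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def ready_to_merge(self) -> bool:
        """A suggestion is mergeable only when no question is left open."""
        return not self.unresolved()


# Example: two questions answered, three still open.
checklist = AIReviewChecklist(follows_architecture=True, security_verified=True)
print(checklist.unresolved())
print(checklist.ready_to_merge())
```

A structure like this could back a pull request template or a pre-merge script; the point is only that each question gets an explicit answer rather than an implicit one.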

Standards for Production AI Code

The code of ai in production environments requires additional rigor beyond development workflows. Research into AI-generated code prevalence and security shows that AI-assisted code already represents a significant portion of new commits in major repositories.

Production standards must address specific risks:

Security verification – AI models learn from public repositories, which include security vulnerabilities. Generated code might replicate these patterns. Run security scans specifically targeting common AI-generated vulnerability patterns.

Performance validation – AI suggestions optimize for correctness, not performance. Profile AI-generated code under realistic load conditions before deploying to production.

Dependency management – AI tools often suggest popular libraries without considering your dependency strategy. Verify that suggested packages align with your security and maintenance policies.

Learning to build production-ready AI applications means understanding these validation steps deeply. Each gate serves a specific purpose in ensuring code quality.
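As an illustration of the dependency gate, the sketch below checks requirements-style lines against a team allowlist. The allowlist contents and the function names `package_name` and `unapproved` are assumptions made for this example, and the name parsing is deliberately simplified (it does not normalize hyphens and underscores, for instance).

```python
import re

# Hypothetical allowlist; a real team would load this from its own policy file.
APPROVED_PACKAGES = {"requests", "sqlalchemy", "pydantic"}


def package_name(requirement: str) -> str:
    """Extract the bare package name from a requirements.txt-style line."""
    # Cut at the first version specifier, extras bracket, marker, or whitespace.
    name = re.split(r"[<>=!~\[;\s]", requirement.strip(), maxsplit=1)[0]
    return name.lower()


def unapproved(requirements: list[str]) -> list[str]:
    """Return package names that are not on the allowlist."""
    names = [
        package_name(line)
        for line in requirements
        if line.strip() and not line.lstrip().startswith("#")
    ]
    return [name for name in names if name not in APPROVED_PACKAGES]


# Example: one approved package and one an AI assistant suggested on its own.
print(unapproved(["requests>=2.31", "left-pad==1.0"]))
```

Anything the function returns is a prompt for a human decision, not an automatic rejection.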

Testing AI-Generated Code

The code of ai requires comprehensive testing strategies. AI-generated code often lacks edge-case handling because models optimize for the common scenarios they saw during training.

Develop test suites that specifically target AI-generated logic:

    # Assumes parse_user_input and expected_output come from the code under test;
    # adapt each assertion to your parser's actual contract.
    def test_ai_generated_parser():
        # Test expected cases
        assert parse_user_input("valid input") == expected_output

        # Test edge cases AI might miss: these calls must not raise
        parse_user_input("")        # empty input
        parse_user_input(None)      # null input
        parse_user_input("💩🔥")    # non-ASCII input

        # Test security boundaries: the injection payload must not pass through unchanged
        payload = "'; DROP TABLE users;--"
        assert parse_user_input(payload) != payload

Write tests before accepting AI suggestions when possible. This validates that the AI solution meets your actual requirements, not just the simplified version you described in your prompt.

Documentation and Attribution Standards

The code of ai includes specific documentation requirements. Teams need clear records of which code received AI assistance, what prompts generated specific solutions, and what modifications developers made.

This documentation serves multiple purposes. It helps future developers understand the code's origin, provides context for debugging, and creates an audit trail for compliance requirements.

Documentation best practices:

- Note when AI generated initial implementations

- Record the problem description you provided

- Explain significant modifications you made

- Link to relevant design decisions

- Document tested edge cases

Some organizations require formal attribution in commit messages when AI tools contribute significantly to a change. While not universally adopted, this practice increases transparency and helps teams track AI tool effectiveness.

Version Control Strategies

Integrate the code of ai standards into your version control workflow. This means holding AI-assisted commits to explicit review and documentation requirements rather than treating them like any other commit.

Consider a commit message template for AI-assisted changes:

    feat: Add user authentication middleware

    AI-assisted implementation using Claude Code
    Modified to align with existing auth patterns
    Added rate limiting not in AI suggestion
    Verified against OWASP guidelines

    Tests: auth.test.js
    Security scan: passed

This level of detail helps teams understand code provenance and review decisions.
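A template only helps if it is actually followed, so some teams may want to check it automatically. The sketch below assumes the template above, with an "AI-assisted" line plus `Tests:` and `Security scan:` lines; the function name `missing_fields` is hypothetical, and wiring it into a commit hook is left out.

```python
# Line prefixes the template expects; adjust to your own convention.
REQUIRED_PREFIXES = ("Tests:", "Security scan:")


def missing_fields(message: str) -> list[str]:
    """Return the template fields that are absent from a commit message."""
    lines = [line.strip() for line in message.splitlines()]
    missing = []
    # The attribution line may be worded freely as long as it starts this way.
    if not any(line.lower().startswith("ai-assisted") for line in lines):
        missing.append("AI-assisted note")
    for prefix in REQUIRED_PREFIXES:
        if not any(line.startswith(prefix) for line in lines):
            missing.append(prefix)
    return missing


example = """feat: Add user authentication middleware

AI-assisted implementation using Claude Code
Tests: auth.test.js
Security scan: passed
"""
print(missing_fields(example))        # every field present
print(missing_fields("fix: typo"))    # all three fields missing
```

A check like this should apply only to commits flagged as AI-assisted; running it on every commit would reject ordinary changes.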

Emerging Tools and Workflows

The code of ai continues evolving as new tools and workflows emerge. Claude Code represents the latest generation of AI coding assistants, offering more sophisticated context awareness and multi-file editing capabilities.

These advanced tools require updated standards. When an AI assistant can modify multiple files simultaneously, your review process must catch cross-file inconsistencies and unintended side effects.

Modern AI coding tool capabilities:

- Multi-file context and editing

- Architecture-level suggestions

- Automated refactoring

- Test generation

- Documentation creation

Each capability demands specific review protocols. Multi-file changes need integration testing. Architecture suggestions require design review. Automated refactoring needs regression testing.
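The pairing of capability and review protocol can be written down as a simple lookup. This is a sketch only: the capability keys are hypothetical labels, the three mappings come from the paragraph above, and the "manual review" fallback for unmapped capabilities is an assumption.

```python
# Capability -> review protocol, as described above. Keys are illustrative labels.
REVIEW_PROTOCOLS = {
    "multi_file_edit": "integration testing",
    "architecture_suggestion": "design review",
    "automated_refactoring": "regression testing",
}

# Assumed fallback for capabilities without a defined protocol.
DEFAULT_PROTOCOL = "manual review"


def required_reviews(capabilities_used: set[str]) -> list[str]:
    """Return the sorted, de-duplicated review steps for a change."""
    steps = {REVIEW_PROTOCOLS.get(c, DEFAULT_PROTOCOL) for c in capabilities_used}
    return sorted(steps)


print(required_reviews({"multi_file_edit", "automated_refactoring"}))
```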

For developers building practical skills with these tools, specialized training programs like the AI Developer Certification (Mammoth Club) provide hands-on experience with production workflows. Learning to integrate these tools effectively separates developers who simply use AI from those who ship quality AI-assisted applications.

Integration with Development Environments

The code of ai extends into your development environment configuration. Modern IDEs integrate AI assistants deeply, requiring thoughtful configuration to balance productivity with quality.

Configure AI tools to align with your code of ai standards:

| Setting | Recommended Configuration | Reason |
|---|---|---|
| Auto-acceptance | Disabled | Requires explicit review |
| Suggestion frequency | Moderate | Reduces interruption |
| Context scope | Project-aware | Better suggestions |
| Comment generation | Enabled with review | Documents intent |
| Test generation | Enabled separately | Focused workflow |

These configurations enforce the human-in-the-loop principle central to responsible AI-assisted development.

Code Quality Metrics for AI Assistance

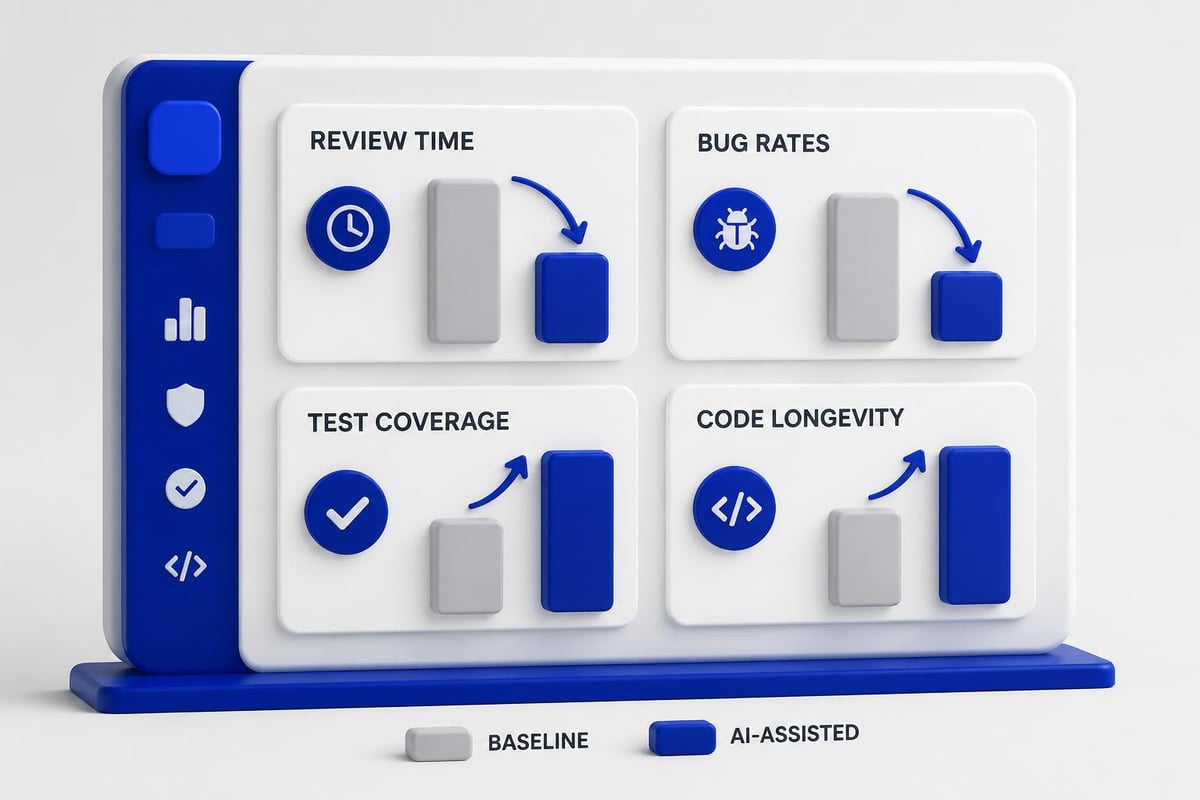

Measuring the impact of AI coding tools requires specific metrics. The code of ai includes standards for tracking both productivity gains and quality implications.

Track these metrics to understand AI tool effectiveness:

- Initial implementation speed – How much faster are first drafts?

- Review cycle length – Does AI code require more review iterations?

- Bug density – Compare AI-assisted vs. human-only code

- Test coverage – Does AI code achieve adequate coverage?

- Maintenance burden – How often does AI code need refactoring?

Research on AI-generated code longevity shows that survival rates vary significantly based on review quality and modification patterns. Code that receives thorough human review persists in repositories at rates comparable to human-written code.
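To make the bug density comparison from the metrics list concrete, here is a minimal sketch that normalizes bug counts per thousand lines of code. The function name `bug_density` and the sample numbers are invented for illustration; how you attribute a bug to AI-assisted or human-only code is a separate problem this sketch does not solve.

```python
def bug_density(bug_count: int, lines_of_code: int) -> float:
    """Return bugs per 1,000 lines of code (KLOC)."""
    if lines_of_code <= 0:
        raise ValueError("lines_of_code must be positive")
    return bug_count / (lines_of_code / 1000)


# Made-up figures for one release cycle.
ai_assisted = bug_density(bug_count=12, lines_of_code=8000)
human_only = bug_density(bug_count=18, lines_of_code=15000)

print(f"AI-assisted: {ai_assisted:.2f} bugs/KLOC")
print(f"Human-only:  {human_only:.2f} bugs/KLOC")
```

Normalizing by size matters because raw bug counts mostly reflect how much code each group produced.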

Establishing Quality Baselines

The code of ai requires baseline measurements before deploying AI tools across your team. Without baselines, you can't determine whether AI assistance improves or degrades code quality.

Establish baselines for:

- Average time to implement features

- Bug escape rate to production

- Test coverage percentage

- Code review comment frequency

- Technical debt accumulation

Monitor these metrics after introducing AI tools. Quality should improve or remain constant. If metrics decline, your code of ai standards need refinement.
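A baseline comparison can be as simple as the sketch below, which flags metrics that moved in the wrong direction by more than a tolerance. The metric names, the 5% default tolerance, and the function name `regressions` are all assumptions for illustration, not recommended values.

```python
# For each tracked metric, whether a higher value is better. Names are illustrative.
HIGHER_IS_BETTER = {
    "test_coverage_pct": True,
    "bug_escape_rate": False,
    "avg_feature_days": False,
}


def regressions(
    baseline: dict[str, float],
    current: dict[str, float],
    tolerance: float = 0.05,
) -> list[str]:
    """Return metrics that got worse than baseline by more than `tolerance`."""
    worse = []
    for name, higher_is_better in HIGHER_IS_BETTER.items():
        base, now = baseline[name], current[name]
        change = (now - base) / base  # relative change; assumes base is non-zero
        if higher_is_better and change < -tolerance:
            worse.append(name)
        elif not higher_is_better and change > tolerance:
            worse.append(name)
    return worse


before = {"test_coverage_pct": 80, "bug_escape_rate": 0.04, "avg_feature_days": 5}
after = {"test_coverage_pct": 70, "bug_escape_rate": 0.04, "avg_feature_days": 4}
print(regressions(before, after))
```

In this made-up example features ship faster but coverage drops, which is exactly the trade-off a baseline is meant to expose.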

Training and Team Adoption

Implementing the code of ai across development teams requires structured training. Developers need hands-on experience with AI tools under realistic conditions, not just documentation.

Effective training programs include:

- Tool-specific workshops – Deep dive into specific AI assistants

- Code review sessions – Practice reviewing AI-generated code

- Security awareness – Identify common AI-generated vulnerabilities

- Pair programming – Experienced developers mentor AI tool adoption

- Project retrospectives – Team learns from AI-assisted project outcomes

The practical tutorials available demonstrate real-world implementation patterns that teams can adopt and adapt. Theory matters less than shipping code that works.

Building Internal Guidelines

Your organization's code of ai should extend beyond general principles to specific implementation details relevant to your stack, domain, and compliance requirements.

Document clear guidelines covering:

## AI Code Acceptance Criteria

### Security

- Run static analysis on all AI-generated code

- Verify input validation in user-facing functions

- Check for hardcoded credentials or tokens

- Review dependency licenses

### Performance

- Profile database query patterns

- Verify async/await usage

- Check memory allocation patterns

- Test under realistic load

### Maintainability

- Ensure consistent naming conventions

- Verify error handling completeness

- Check logging and monitoring integration

- Validate documentation accuracy

These guidelines become your team's working definition of acceptable AI assistance.
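Some of these criteria can be partly automated. As one example, the sketch below covers the "hardcoded credentials or tokens" item with two deliberately simple patterns. The patterns and the function name `find_hardcoded_secrets` are illustrative assumptions; a scan like this will miss many real secrets and flag some harmless strings, so it complements a dedicated secret scanner rather than replacing one.

```python
import re

# Illustrative patterns only; real scanners use far larger rule sets.
SECRET_PATTERNS = [
    # A credential-like name assigned a quoted string literal.
    re.compile(
        r"(password|passwd|secret|api_key|token)\w*\s*=\s*['\"][^'\"]+['\"]",
        re.IGNORECASE,
    ),
    # The shape of an AWS access key ID: "AKIA" plus 16 uppercase alphanumerics.
    re.compile(r"AKIA[0-9A-Z]{16}"),
]


def find_hardcoded_secrets(source: str) -> list[int]:
    """Return 1-based line numbers that match any secret pattern."""
    hits = []
    for number, line in enumerate(source.splitlines(), start=1):
        if any(pattern.search(line) for pattern in SECRET_PATTERNS):
            hits.append(number)
    return hits


sample = 'import os\ndb_password = "hunter2"\ntoken = os.environ["TOKEN"]\n'
print(find_hardcoded_secrets(sample))
```

Reading a value from the environment, as on the third sample line, does not trip the first pattern because nothing quoted is assigned directly.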

Future of the Code of AI

The code of ai will continue evolving as AI capabilities expand and more developers integrate these tools into daily workflows. Upcoming challenges include handling AI assistants that can architect entire systems, manage deployments, and optimize infrastructure.

Standards must keep pace with capabilities. As AI tools become more autonomous, human oversight becomes more critical, not less. The principle of developer accountability remains constant even as tools change.

Organizations investing in comprehensive AI coding resources position their teams to adapt to emerging standards and maintain competitive advantages. The code of ai isn't about restricting AI use but about using it responsibly and effectively.

Developers who master these standards will differentiate themselves in the market. Knowing how to prompt AI tools is not enough; knowing how to validate, integrate, and maintain AI-generated code is a core professional competency in 2026.

The code of ai reflects a maturation of AI-assisted development from experimental tooling to production discipline. Every line of AI-generated code that ships to production should meet the same standards as human-written code, backed by human understanding and accountability.

Mastering the code of ai means shipping better software faster while maintaining quality and security standards. These practices protect your codebase, your users, and your professional reputation.