Building AI projects takes more than theoretical knowledge. Developers need practical implementation skills, working code examples, and real-world experience deploying AI systems into production. AI development has evolved rapidly, and modern APIs and tools make it possible to ship intelligent applications faster than ever. Whether you're building chatbots, recommendation engines, or computer vision systems, understanding how to structure, develop, and deploy these projects separates working developers from tutorial followers.

Core Categories of AI Development Projects

AI projects fall into distinct categories based on their technical approach and business application. Understanding these categories helps you choose the right tools and architecture for your specific use case.

Natural Language Processing Applications

NLP projects transform how users interact with software through text and voice interfaces. These systems process human language, extract meaning, and generate contextually appropriate responses.

Common NLP project types include:

- Chatbots and conversational AI agents

- Sentiment analysis systems for customer feedback

- Text summarization tools for content processing

- Named entity recognition for data extraction

- Language translation services

Building an NLP project typically starts with selecting an appropriate language model. OpenAI's GPT-4, Anthropic's Claude, and open-source alternatives like LLaMA each offer different tradeoffs in cost, performance, and customization. The tutorial chatbot guide demonstrates practical implementation patterns for conversational interfaces.

Implementation requires careful prompt engineering, context management, and response validation. You'll need to handle edge cases, implement rate limiting, and manage API costs effectively.
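As one illustration of context management, the sketch below trims a conversation history to fit a token budget, keeping the system message and the most recent turns. The message shape, the `trim_history` helper, and the four-characters-per-token heuristic in `estimate_tokens` are assumptions made for this example; a real project would count tokens with the tokenizer for its chosen model.

```python
from typing import Dict, List

Message = Dict[str, str]  # hypothetical shape: {"role": ..., "content": ...}


def estimate_tokens(text: str) -> int:
    """Rough heuristic (assumption): about 4 characters per token."""
    return max(1, len(text) // 4)


def trim_history(messages: List[Message], max_tokens: int) -> List[Message]:
    """Keep the leading system message (if any) plus the newest turns that fit."""
    if not messages:
        return []
    system = messages[:1] if messages[0]["role"] == "system" else []
    rest = messages[len(system):]
    budget = max_tokens - sum(estimate_tokens(m["content"]) for m in system)

    kept: List[Message] = []
    # Walk backwards so the newest turns are considered first.
    for message in reversed(rest):
        cost = estimate_tokens(message["content"])
        if cost > budget:
            break
        kept.append(message)
        budget -= cost
    return system + list(reversed(kept))
```

Dropping the oldest turns first is the simplest policy; summarizing older turns instead is a common alternative when earlier context still matters.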

Computer Vision Systems

Computer vision projects analyze and interpret visual data from images and video streams. They power everything from autonomous vehicles to medical diagnostic tools.

Key computer vision applications:

- Object detection and classification

- Facial recognition systems

- Image segmentation for scene understanding

- Optical character recognition (OCR)

- Real-time video analysis

| Project Type | Primary Use Case | Key Technology | Difficulty Level |
|---|---|---|---|
| Image Classification | Product categorization | CNN models | Beginner |
| Object Detection | Security systems | YOLO, R-CNN | Intermediate |
| Semantic Segmentation | Medical imaging | U-Net, Mask R-CNN | Advanced |
| Face Recognition | Access control | FaceNet, DeepFace | Intermediate |

The Harvard CS50 AI course provides foundational projects that teach computer vision concepts through hands-on implementation. Modern frameworks like TensorFlow and PyTorch have simplified model training, but understanding data preparation and model evaluation remains critical.

Machine Learning Prediction Models

Predictive models use historical data to forecast future outcomes, powering recommendation engines, fraud detection, and demand forecasting systems.

These projects require different skills than generative AI work. You'll spend significant time on data cleaning, feature engineering, and model validation. The prediction accuracy depends heavily on data quality and proper train-test splits.
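To make the train-test split concrete, here is a minimal standard-library sketch. The `train_test_split` helper defined here is written for illustration (libraries such as scikit-learn ship their own version with more options), and the 80/20 ratio and fixed seed are assumptions rather than recommendations.

```python
import random
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def train_test_split(
    rows: Sequence[T], test_fraction: float = 0.2, seed: int = 42
) -> Tuple[List[T], List[T]]:
    """Shuffle once with a fixed seed, then cut into (train, test) lists."""
    if not 0.0 < test_fraction < 1.0:
        raise ValueError("test_fraction must be between 0 and 1")
    shuffled = list(rows)
    # A seeded Random instance makes the split reproducible across runs.
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, int(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]
```

A random shuffle suits independent records. For time-ordered data, a chronological cut is usually safer because it keeps future rows out of the training set.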

Essential steps for ML projects:

- Data collection and cleaning

- Exploratory data analysis

- Feature engineering and selection

- Model training and validation

- Hyperparameter tuning

- Deployment and monitoring

Popular algorithms include random forests for tabular data, gradient boosting machines for structured predictions, and neural networks for complex pattern recognition. The Coursera AI projects list offers structured learning paths for different skill levels.

Building Production-Ready AI Applications

Moving from prototype to production requires addressing scalability, reliability, and cost management challenges that don't exist in development environments.

API Integration Patterns

Modern AI development relies heavily on external APIs. OpenAI, Anthropic, Cohere, and other providers offer powerful models through RESTful interfaces, but integrating them properly requires careful architecture.

Best practices for API integration:

- Implement exponential backoff for rate limit handling

- Cache responses when appropriate to reduce costs

- Use streaming for long-running requests

- Monitor token usage and implement budget controls

- Build fallback mechanisms for API outages

Authentication, error handling, and request validation form the foundation of reliable API integration. Store API keys securely using environment variables or a secret management service; never hardcode credentials. The ClawdBotAI showcase demonstrates real-world implementations from developers shipping AI features.
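Two of the practices above, exponential backoff and environment-based credentials, can be sketched with the standard library alone. `RateLimitError` is a hypothetical stand-in for whatever exception your provider's client raises on HTTP 429, and the `AI_API_KEY` variable name is likewise an assumption.

```python
import os
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimitError(Exception):
    """Hypothetical stand-in for a provider's rate-limit (HTTP 429) error."""


def call_with_backoff(
    request: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 1.0,
    max_delay: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry `request` on RateLimitError, doubling the wait cap each attempt."""
    for attempt in range(max_attempts):
        try:
            return request()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # out of retries: surface the error to the caller
            cap = min(max_delay, base_delay * (2 ** attempt))
            sleep(random.uniform(0, cap))  # random jitter spreads out retries
    raise ValueError("max_attempts must be at least 1")


def load_api_key(name: str = "AI_API_KEY") -> str:
    """Read the key from the environment; the variable name is an assumption."""
    key = os.environ.get(name)
    if not key:
        raise RuntimeError(f"Set the {name} environment variable")
    return key
```

The `sleep` parameter exists so tests can pass a recorder instead of actually waiting. The jitter keeps many clients from retrying at the same instant after a shared outage.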

Data Pipeline Architecture

AI projects depend on clean, well-structured data. Building robust data pipelines ensures your models receive consistent, high-quality inputs.

Start with data validation at ingestion points. Reject malformed inputs early rather than processing garbage data through your entire pipeline. Implement schema validation using tools like Pydantic for Python or Zod for TypeScript.
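The idea of rejecting malformed inputs at the ingestion point can be sketched without third-party dependencies. This example uses a plain dataclass and hand-written checks as a stand-in for Pydantic, which would express the same rules declaratively; the `FeedbackRecord` schema and its fields are hypothetical.

```python
from dataclasses import dataclass
from typing import Any, Dict


class ValidationError(ValueError):
    """Raised when an incoming record does not match the expected shape."""


@dataclass(frozen=True)
class FeedbackRecord:
    """Hypothetical ingestion schema: one piece of customer feedback."""
    user_id: str
    text: str
    rating: int


def parse_record(raw: Dict[str, Any]) -> FeedbackRecord:
    """Validate a raw dict and return a typed record, or raise ValidationError."""
    missing = {"user_id", "text", "rating"} - raw.keys()
    if missing:
        raise ValidationError(f"missing fields: {sorted(missing)}")
    if not isinstance(raw["user_id"], str) or not raw["user_id"]:
        raise ValidationError("user_id must be a non-empty string")
    if not isinstance(raw["text"], str) or not raw["text"].strip():
        raise ValidationError("text must be a non-empty string")
    rating = raw["rating"]
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(rating, bool) or not isinstance(rating, int) or not 1 <= rating <= 5:
        raise ValidationError("rating must be an integer from 1 to 5")
    return FeedbackRecord(raw["user_id"], raw["text"].strip(), rating)
```

Everything downstream of `parse_record` can then rely on the record's types and ranges instead of re-checking them.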

Pipeline components:

- Data ingestion and validation

- Preprocessing and normalization

- Feature extraction and transformation

- Model inference or training

- Result storage and caching

Consider batch processing for large datasets and streaming processing for real-time applications. Apache Kafka, RabbitMQ, or cloud-native solutions like AWS Kinesis handle high-throughput data streams effectively.

Model Serving Infrastructure

Deploying models requires infrastructure that handles inference requests efficiently while maintaining low latency and high availability.

| Deployment Option | Best For | Pros | Cons |
|---|---|---|---|
| Serverless Functions | Low traffic APIs | Easy scaling, minimal ops | Cold start latency |
| Container Services | Medium traffic | Flexible, portable | Requires orchestration |
| Dedicated Servers | High traffic | Consistent performance | Higher cost, manual scaling |
| Edge Deployment | Low latency needs | Offline capability | Limited compute power |

Container orchestration with Kubernetes or cloud platforms like AWS ECS provides production-grade deployment infrastructure. Implement health checks, logging, and monitoring from day one. Track inference latency, error rates, and resource utilization to identify bottlenecks.
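Tracking inference latency and error rates does not have to wait for a full observability stack. The sketch below is an in-process collector; the `InferenceMetrics` class and its method names are invented for this example, and a production service would more likely export the same numbers to a metrics backend.

```python
import math
import time
from collections import deque
from typing import Callable, Deque, TypeVar

T = TypeVar("T")


class InferenceMetrics:
    """Keeps recent latencies and running request/error counts for one endpoint."""

    def __init__(self, window: int = 1000) -> None:
        self.latencies_ms: Deque[float] = deque(maxlen=window)
        self.requests = 0
        self.errors = 0

    def observe(self, fn: Callable[[], T]) -> T:
        """Run `fn`, recording how long it took and whether it raised."""
        start = time.perf_counter()
        self.requests += 1
        try:
            return fn()
        except Exception:
            self.errors += 1
            raise
        finally:
            self.latencies_ms.append((time.perf_counter() - start) * 1000)

    def percentile(self, p: float) -> float:
        """Nearest-rank percentile of the recorded latencies (p in 0..100)."""
        if not self.latencies_ms:
            return 0.0
        ordered = sorted(self.latencies_ms)
        rank = max(1, math.ceil(p / 100 * len(ordered)))
        return ordered[rank - 1]

    @property
    def error_rate(self) -> float:
        return self.errors / self.requests if self.requests else 0.0
```

Wrapping each model call as `metrics.observe(lambda: model.predict(x))` (with `model` being whatever inference object you use) is enough to watch the 95th-percentile latency alongside the error rate.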

Practical Project Implementation Strategies

Completing an AI project successfully requires structured planning and iterative development rather than jumping straight into coding.

Starting With Minimum Viable Features

Define the smallest possible feature set that delivers value. For a recommendation system, this might mean starting with collaborative filtering before building complex neural networks. For chatbots, implement basic intent recognition before adding contextual memory.

MVP development checklist:

- Define one core use case

- Identify minimum required data

- Select the simplest viable model

- Build basic API endpoints

- Implement essential error handling

- Deploy to staging environment

- Test with real users

This approach lets you validate assumptions quickly and adjust based on feedback. The Microsoft SCAI projects demonstrate how research teams iterate on societal-impact AI applications.

Code Organization and Project Structure

Structure your codebase to support growth from prototype to production system. Separate concerns clearly: data processing, model inference, API handlers, and business logic should live in distinct modules.

    project/
    ├── src/
    │   ├── data/
    │   │   ├── loaders.py
    │   │   └── validators.py
    │   ├── models/
    │   │   ├── inference.py
    │   │   └── training.py
    │   ├── api/
    │   │   ├── routes.py
    │   │   └── middleware.py
    │   └── utils/
    ├── tests/
    ├── config/
    └── docs/

Use configuration files for model parameters, API endpoints, and environment-specific settings. This separation makes it easier to test different configurations and deploy across environments.

Testing AI Systems

Testing AI systems differs from traditional software testing. You're validating probabilistic outputs rather than deterministic results, which requires different strategies.

Implement unit tests for data processing functions, integration tests for API endpoints, and model validation tests for prediction quality. Create test datasets that represent edge cases and common failures.

Testing strategies:

- Assertion-based tests for data processing

- Golden dataset comparisons for model outputs

- A/B testing for production performance

- User acceptance testing for UX quality

Monitor model drift in production by tracking prediction distributions over time. Significant changes may indicate data quality issues or shifting user behavior requiring model retraining.
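One common way to quantify a shift in prediction distributions is the Population Stability Index (PSI). It is named here as one option among several, not as a method this article prescribes. The sketch below buckets a baseline sample of model scores and compares a current sample against it; the bucket count and the small floor used to avoid `log(0)` are assumptions.

```python
import math
from typing import List, Sequence


def _bucket_shares(values: Sequence[float], edges: List[float]) -> List[float]:
    """Share of values per bucket; a small floor avoids log(0) later."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        idx = sum(1 for e in edges if v >= e)  # number of edges at or below v
        counts[idx] += 1
    total = len(values)
    return [max(c / total, 1e-6) for c in counts]


def population_stability_index(
    baseline: Sequence[float], current: Sequence[float], buckets: int = 10
) -> float:
    """PSI between two samples of scores, bucketed on the baseline's range."""
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / buckets or 1.0  # fall back if all baseline values match
    edges = [lo + width * i for i in range(1, buckets)]
    expected = _bucket_shares(baseline, edges)
    actual = _bucket_shares(current, edges)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))
```

Identical samples give a PSI of zero, and the value grows as the distributions diverge. A frequently quoted rule of thumb treats values above roughly 0.2 as worth investigating, but the right alert threshold depends on the application.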

Advanced AI Project Patterns

Once you've mastered basic implementations, advanced patterns unlock more sophisticated applications and better performance.

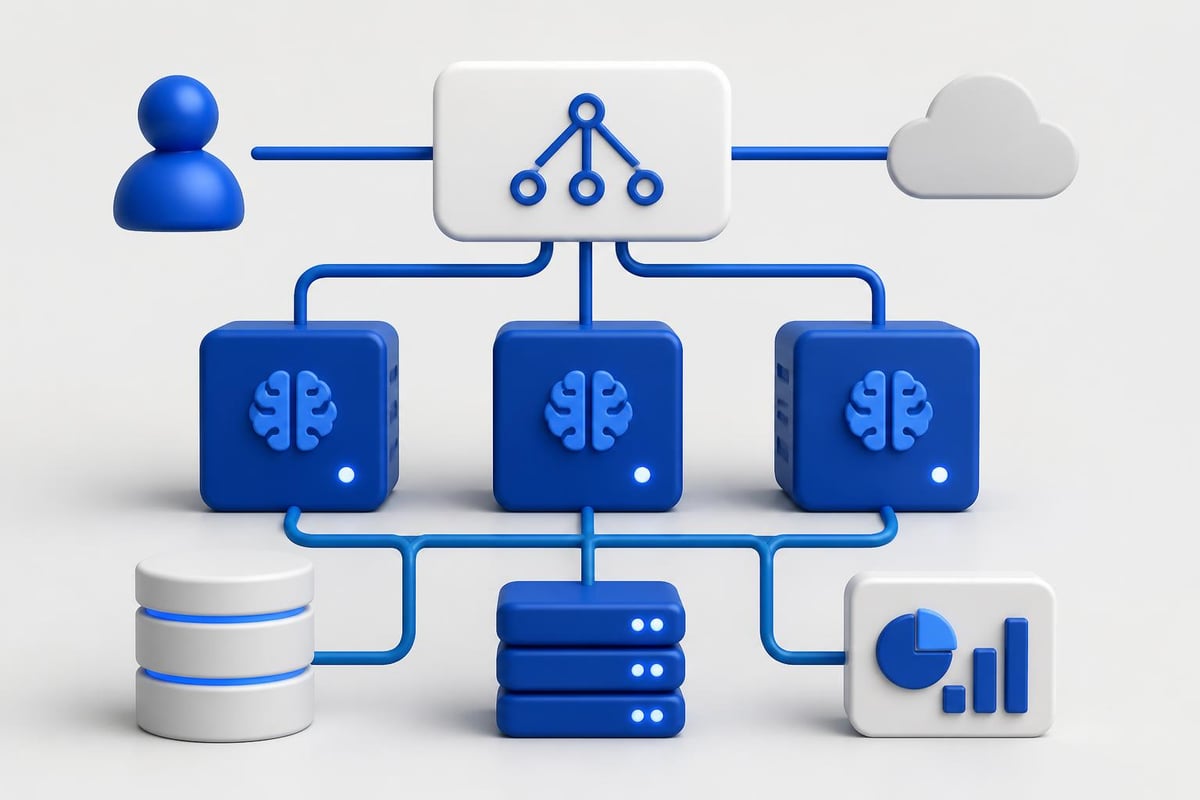

Multi-Model Systems

Complex applications often combine multiple AI models, each handling specific subtasks. A content moderation system might use one model for text classification, another for image analysis, and a third for policy decision-making.

Orchestrating multiple models requires careful state management and error handling. If one model fails, determine whether to fail the entire request or degrade gracefully with reduced functionality.
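The fail-or-degrade decision can be made explicit in code. In this sketch each model is a plain callable returning a score, and a `required` set marks the models whose failure should abort the request; the `ModerationResult` type and `run_models` function are hypothetical names for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, FrozenSet, List


@dataclass
class ModerationResult:
    scores: Dict[str, float] = field(default_factory=dict)
    failed: List[str] = field(default_factory=list)

    @property
    def degraded(self) -> bool:
        """True when at least one optional model did not contribute."""
        return bool(self.failed)


def run_models(
    content: str,
    models: Dict[str, Callable[[str], float]],
    required: FrozenSet[str] = frozenset(),
) -> ModerationResult:
    """Run each model; fail the request only if a required model fails."""
    result = ModerationResult()
    for name, model in models.items():
        try:
            result.scores[name] = model(content)
        except Exception:
            if name in required:
                raise  # no safe fallback: fail the whole request
            result.failed.append(name)  # optional model: degrade gracefully
    return result
```

Callers can check `result.degraded` to decide whether to show a reduced-confidence answer or route the item to manual review.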

The AI project archetypes research paper examines how different project frameworks influence development decisions. Understanding these patterns helps you structure multi-model systems effectively.

Real-Time Processing Pipelines

Real-time AI applications process data streams with minimal latency. They power fraud detection, autonomous systems, and live content moderation.

Real-time processing requirements:

- Low-latency model inference (under 100ms)

- Stream processing infrastructure

- Stateful computation handling

- Failure recovery mechanisms

- Monitoring and alerting

Optimize models for inference speed through quantization, pruning, or knowledge distillation. Deploy models close to data sources to minimize network latency. Consider edge deployment for applications requiring sub-50ms responses.

Feedback Loops and Continuous Learning

Production AI systems benefit from continuous improvement based on user interactions and new data. Implement feedback collection mechanisms that capture both explicit signals (user ratings) and implicit signals (click patterns, completion rates).

Store feedback data in structured formats that support model retraining. Implement version control for models and datasets to track performance changes over time. The GeeksforGeeks project ideas include examples with feedback integration patterns.

Industry-Specific AI Applications

Different industries apply AI to solve unique domain challenges, which requires specialized knowledge and attention to compliance.

Healthcare and Medical AI

Medical AI projects assist with diagnosis, treatment planning, and patient monitoring. These systems must meet strict regulatory requirements and maintain high accuracy standards given the life-critical nature of healthcare decisions.

Projects range from analyzing medical images for disease detection to predicting patient readmission risks. HIPAA compliance, data privacy, and explainability requirements add complexity beyond typical software projects.

Financial Services Applications

Financial institutions deploy AI for fraud detection, risk assessment, algorithmic trading, and customer service automation. These systems process millions of transactions in real-time while maintaining audit trails and regulatory compliance.

Model explainability becomes critical when decisions affect credit approvals or investment recommendations. Implement comprehensive logging and decision tracking to support regulatory audits.

Military and Defense Systems

The military sector increasingly relies on AI for strategic applications. AI transforms modern warfare through enhanced decision-making, autonomous systems, and logistics optimization. These projects require specialized security clearances and operate under strict operational constraints.

Emerging Trends in AI Development

The AI development landscape continues evolving rapidly, with new tools and approaches emerging regularly throughout 2026.

Space-Based AI Deployment

Tech companies are expanding AI capabilities beyond Earth. Google, Amazon, and xAI plan AI launches into space to improve global connectivity and processing power. These projects represent the cutting edge of distributed AI systems operating in extreme environments.

Autonomous Agent Systems

AI agents that operate independently, make decisions, and execute tasks without constant human oversight represent a significant shift in how AI projects are built. These systems combine language models, planning algorithms, and tool-using capabilities.

Building autonomous agents requires careful safety considerations, budget controls, and monitoring systems. Implement kill switches and human-in-the-loop approvals for high-stakes decisions.
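A kill switch and a human-in-the-loop gate can be as small as a wrapper around action execution. The sketch below is one possible shape, not a standard agent API: the `Action` type, the `approve` callback, and the step limit are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Action:
    name: str
    high_stakes: bool = False


class AgentStopped(Exception):
    """Raised when the kill switch is on or the step limit is reached."""


class GuardedAgent:
    def __init__(self, approve: Callable[[Action], bool], max_steps: int = 10) -> None:
        self.approve = approve      # hypothetical callback that asks a human
        self.max_steps = max_steps  # hard cap on handled actions per run
        self.killed = False         # kill switch, flipped by an operator
        self.log: List[str] = []

    def execute(self, action: Action) -> bool:
        """Record the action as executed if allowed; False if a human rejected it."""
        if self.killed or len(self.log) >= self.max_steps:
            raise AgentStopped(action.name)
        if action.high_stakes and not self.approve(action):
            self.log.append(f"rejected:{action.name}")
            return False
        # A real agent would invoke the tool here; this sketch only logs.
        self.log.append(f"executed:{action.name}")
        return True
```

Counting rejected actions toward the step limit is deliberate: an agent that keeps proposing rejected actions should still run out of budget.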

Multimodal AI Integration

Modern projects increasingly combine text, image, audio, and video processing in unified systems. OpenAI's GPT-4V and Google's Gemini demonstrate multimodal capabilities, enabling applications that understand and generate content across different media types.

Certification and Professional Development

Developers looking to validate their AI skills and stand out in the competitive market should consider structured learning paths that emphasize real-world implementation over theoretical knowledge.

The AI Developer Certification (Mammoth Club) program focuses on building production-ready applications using OpenAI, Claude, and other modern APIs. The curriculum covers prompt engineering, backend workflows, automation, and deployment through hands-on projects that mirror real development scenarios.

Resources for Continued Learning

The AI field evolves rapidly, requiring developers to maintain current knowledge through continuous learning. Research papers, community projects, and educational platforms provide different perspectives on emerging techniques.

The AI and Life in 2030 analysis examines long-term societal implications of AI development, helping developers understand broader context for their work. Academic resources combined with practical tutorials create comprehensive learning paths.

Recommended resource types:

- Research papers for theoretical foundations

- GitHub repositories for code examples

- API documentation for implementation details

- Community forums for problem-solving

- Video tutorials for visual learners

Stay engaged with the developer community through code sharing, project showcases, and technical discussions. Learning from others' implementations accelerates your own development and exposes you to diverse problem-solving approaches.

Performance Optimization Techniques

AI projects often hit performance bottlenecks as they scale from prototype to production workloads. Systematic optimization across the stack improves response times and reduces operational costs.

Model-Level Optimizations

Reduce inference latency through model compression techniques. Quantization converts model weights from 32-bit floating point to lower-precision formats such as 8-bit integers, which cuts memory use roughly fourfold and can speed up computation, usually with only a small loss in accuracy.
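To show what the 32-bit to 8-bit conversion involves, here is a toy symmetric quantization of a weight list in plain Python. It is a sketch of the arithmetic only; real frameworks quantize whole tensors, often per channel, and the function names here are illustrative.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric quantization: map floats to integers in [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127 if max_abs else 1.0  # avoid dividing by zero
    return [round(w / scale) for w in weights], scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the integer values."""
    return [q * scale for q in quantized]
```

Each weight is stored as one small integer plus a shared `scale`, and the rounding error per weight is at most about half of `scale`. That bound is why accuracy usually degrades only slightly when the weight range is not dominated by outliers.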

Pruning removes unnecessary neural network connections, creating smaller models that run faster. Knowledge distillation trains smaller "student" models to mimic larger "teacher" models, preserving accuracy while reducing computational requirements.

Infrastructure Optimizations

Deploy models on GPU instances for computationally intensive operations. Batch multiple inference requests together to maximize GPU utilization. Implement request queuing to smooth traffic spikes and prevent resource exhaustion.

Use content delivery networks (CDNs) to cache static assets and frequently requested predictions. Implement intelligent caching strategies that balance freshness requirements against performance gains.
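Balancing freshness against performance often comes down to a time-to-live (TTL) on cached predictions. The following in-memory sketch illustrates the idea; the `TTLCache` class is hypothetical, and a shared cache such as Redis would play this role across multiple servers.

```python
import time
from typing import Any, Callable, Dict, Hashable, Tuple


class TTLCache:
    """In-memory cache whose entries expire after `ttl_seconds`."""

    def __init__(
        self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic
    ) -> None:
        self.ttl = ttl_seconds
        self.clock = clock
        self._store: Dict[Hashable, Tuple[float, Any]] = {}

    def get_or_compute(self, key: Hashable, compute: Callable[[], Any]) -> Any:
        """Return a fresh cached value, or call `compute` and store the result."""
        now = self.clock()
        hit = self._store.get(key)
        if hit is not None and now - hit[0] < self.ttl:
            return hit[1]  # fresh entry: skip the model call
        value = compute()
        self._store[key] = (now, value)
        return value
```

This sketch only replaces stale entries when their key is requested again, so it never shrinks. A long-running service would also need a size limit or periodic cleanup.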

| Optimization Type | Impact | Implementation Effort | Cost Savings |
|---|---|---|---|
| Model Quantization | 2-4x faster | Low | 30-50% |
| Request Batching | 3-5x throughput | Medium | 40-60% |
| Response Caching | 10-100x faster | Low | 60-80% |
| GPU Acceleration | 5-10x faster | High | Varies |

Code-Level Optimizations

Profile your code to identify bottlenecks before optimizing. Python's cProfile or line_profiler tools reveal which functions consume the most CPU time. Optimize hot paths first for maximum impact.
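A minimal way to try this with the standard library is shown below. `slow_sum` is a deliberately naive function invented for the example, and `profile_call` is a small helper written here, not part of `cProfile` itself.

```python
import cProfile
import io
import pstats
from typing import Any, Callable


def slow_sum(n: int) -> int:
    """Deliberately naive loop so it shows up in the profile."""
    total = 0
    for i in range(n):
        total += i * i
    return total


def profile_call(fn: Callable[..., Any], *args: Any) -> str:
    """Run `fn` under cProfile and return the top entries by cumulative time."""
    profiler = cProfile.Profile()
    profiler.enable()
    fn(*args)
    profiler.disable()
    out = io.StringIO()
    stats = pstats.Stats(profiler, stream=out)
    stats.sort_stats("cumulative").print_stats(5)  # show the 5 costliest entries
    return out.getvalue()


if __name__ == "__main__":
    print(profile_call(slow_sum, 200_000))
```

For a whole script, running `python -m cProfile -s cumulative your_script.py` gives a similar report without changing any code.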

Use async/await patterns for I/O-bound operations. While waiting for API responses or database queries, your application can process other requests. This concurrency improves throughput without adding hardware.
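The effect is easiest to see with several simulated API calls running at once. In this sketch `fake_model_call` is a hypothetical stand-in that sleeps instead of performing network I/O, and the concurrency cap of five is an arbitrary assumption.

```python
import asyncio
from typing import List


async def fake_model_call(prompt: str, delay: float = 0.05) -> str:
    """Hypothetical stand-in for an awaitable API call; sleeps instead of doing I/O."""
    await asyncio.sleep(delay)
    return f"response to {prompt!r}"


async def answer_all(prompts: List[str], max_concurrency: int = 5) -> List[str]:
    """Run the calls concurrently, capped by a semaphore to respect rate limits."""
    semaphore = asyncio.Semaphore(max_concurrency)

    async def guarded(prompt: str) -> str:
        async with semaphore:
            return await fake_model_call(prompt)

    # gather returns results in the same order as the input prompts.
    return await asyncio.gather(*(guarded(p) for p in prompts))


if __name__ == "__main__":
    print(asyncio.run(answer_all(["a", "b", "c"])))
```

With the default cap, three calls finish in roughly the time of one, because the waits overlap instead of running back to back.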

Replace slow Python loops with vectorized NumPy operations when processing numerical data. Use compiled extensions like Numba for performance-critical sections that can't be vectorized.

Security and Privacy Considerations

AI projects handle sensitive data and make important decisions, creating security and privacy obligations that extend beyond traditional software development.

Data Protection Strategies

Implement encryption for data at rest and in transit. Use industry-standard protocols like TLS 1.3 for network communication and AES-256 for stored data. Manage encryption keys through dedicated key management services rather than hardcoding them.

Minimize data retention by deleting user data when no longer needed for model improvement or legal compliance. Implement data anonymization for analytics and model training to prevent re-identification of individuals.
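One building block for this is replacing direct identifiers with a keyed hash before data reaches analytics or training sets. Strictly speaking this is pseudonymization, not full anonymization, since other fields can still identify a person. The `pseudonymize` helper and the example key below are illustrative assumptions.

```python
import hashlib
import hmac


def pseudonymize(user_id: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed hash so records can still be joined."""
    digest = hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()


if __name__ == "__main__":
    key = b"example-key-load-from-a-secret-manager"  # placeholder, not a real key
    print(pseudonymize("user-12345", key))
```

Using HMAC with a secret key, instead of a bare hash, means someone without the key cannot rebuild the mapping by hashing guessed identifiers. The key itself should be stored in a key management service like any other secret.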

Privacy best practices:

- Collect only necessary data

- Obtain explicit user consent

- Provide data export capabilities

- Support deletion requests promptly

- Document data processing activities

- Run regular privacy impact assessments

Model Security

Protect models from adversarial attacks designed to manipulate predictions or extract training data. Implement input validation to reject obviously malicious inputs. Monitor for unusual prediction patterns that might indicate attack attempts.

Secure your API endpoints with authentication, rate limiting, and abuse detection. Track usage patterns to identify suspicious behavior like credential stuffing or data scraping attempts.
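Rate limiting is often implemented with a token bucket, which permits short bursts while enforcing an average rate. The sketch below keeps one bucket in memory; the `TokenBucket` class is written for illustration, and a multi-server deployment would need shared state.

```python
import time
from typing import Callable


class TokenBucket:
    """Allows bursts up to `capacity`, refilling at `rate` tokens per second."""

    def __init__(
        self,
        rate: float,
        capacity: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = capacity  # start full so the first burst is allowed
        self.updated = clock()

    def allow(self, cost: float = 1.0) -> bool:
        """Return True and spend tokens if the request fits, else False."""
        now = self.clock()
        elapsed = now - self.updated
        # Refill for the time that has passed, never above capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False
```

Keeping one bucket per API key or client IP, for example in a dictionary, turns this into per-client limiting; requests that get `False` would typically receive an HTTP 429 response.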

Regularly update dependencies to patch security vulnerabilities. Use automated tools like Dependabot or Snyk to monitor for known vulnerabilities in your project's dependencies.

Cost Management for AI Projects

AI projects can generate significant cloud computing and API costs if not properly managed. Implement cost controls from the beginning rather than trying to optimize expenses after launch.

Token Budget Management

Language model APIs charge per token processed. Monitor token usage across requests and implement budgets that prevent runaway costs from bugs or abuse.
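A budget check can sit in front of every model call. This sketch tracks usage per user in memory and refuses requests that would exceed a limit; the `TokenBudget` class and its method names are hypothetical, and how a budget period resets is left out.

```python
from collections import defaultdict
from typing import DefaultDict


class BudgetExceeded(Exception):
    """Raised when a request would push a user past their token allowance."""


class TokenBudget:
    """Tracks tokens used per user against a fixed per-period limit."""

    def __init__(self, limit_per_user: int) -> None:
        self.limit = limit_per_user
        self.used: DefaultDict[str, int] = defaultdict(int)

    def reserve(self, user_id: str, estimated_tokens: int) -> None:
        """Check the budget before calling the API; raise if it would overflow."""
        if self.used[user_id] + estimated_tokens > self.limit:
            raise BudgetExceeded(f"{user_id} would exceed {self.limit} tokens")
        self.used[user_id] += estimated_tokens

    def reconcile(self, user_id: str, estimated_tokens: int, actual_tokens: int) -> None:
        """Swap the earlier estimate for the usage the provider reported."""
        self.used[user_id] += actual_tokens - estimated_tokens

    def remaining(self, user_id: str) -> int:
        return max(0, self.limit - self.used[user_id])
```

Reserving an estimate up front and reconciling afterwards means a burst of simultaneous requests cannot all slip through before any of them is counted.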

Optimize prompts to minimize token consumption while maintaining output quality. Remove unnecessary context, use shorter examples, and leverage model features like function calling that reduce verbose outputs.

Cost reduction strategies:

- Cache common responses

- Implement request deduplication

- Use smaller models for simple tasks

- Batch requests when latency permits

- Set per-user usage limits

Infrastructure Cost Control

Right-size compute resources based on actual usage patterns rather than peak capacity. Use autoscaling to add resources during traffic spikes and remove them during quiet periods.

Leverage spot instances or preemptible VMs for non-critical workloads like model training or batch processing. These instances often cost 60-80% less than on-demand pricing, though discounts vary by provider and instance type, and they may be interrupted with short notice.

Monitor costs daily rather than waiting for monthly bills. Set up alerts when spending exceeds thresholds. Many cloud providers offer free cost monitoring tools that identify expensive resources and suggest optimizations.

Collaboration and Team Development

Building complex AI projects requires coordinated effort across developers, data scientists, and domain experts. Effective collaboration practices prevent miscommunication and duplicated work.

Version Control for AI Projects

Git manages code effectively but struggles with large model files and datasets. Use Git LFS (Large File Storage) for model weights and DVC (Data Version Control) for datasets.

Track experiments systematically using tools like MLflow or Weights & Biases. Record model hyperparameters, training data versions, and evaluation metrics for every experiment. This documentation helps reproduce results and compare approaches.

Essential version control practices:

- Branch naming conventions

- Commit message standards

- Code review requirements

- Automated testing gates

- Release tagging procedures

Documentation Standards

Document architecture decisions, API contracts, and deployment procedures. Future team members (including future you) need context to understand why systems work the way they do.

Maintain up-to-date README files with setup instructions, dependency lists, and common troubleshooting steps. Include example requests and responses for API endpoints. The AI Code Central resources demonstrate effective technical documentation patterns.

Generate API documentation automatically from code annotations using tools like Swagger (OpenAPI) for REST APIs or GraphQL's built-in introspection for GraphQL endpoints. Automated documentation stays synchronized with code changes.

Code Review Processes

Implement mandatory code reviews for all changes to production systems. Reviews catch bugs, share knowledge across the team, and maintain code quality standards.

Review AI-specific concerns like prompt injection vulnerabilities, data validation gaps, and error handling completeness. Check that new features include appropriate tests and documentation.

Building successful AI projects demands practical skills in API integration, model deployment, and production operations. Developers who focus on shipping real applications rather than chasing theoretical knowledge gain a competitive advantage in today's AI-driven development landscape. AI Code Central provides the tutorials, code examples, and project-based learning you need to build production-ready AI features, improve your development workflow, and advance your career in artificial intelligence development.